Preparing for the DevOps interview

This is a list of DevOps interview questions, ranging from general and abstract ones to more technical ones, that a DevOps candidate might be asked in an interview.

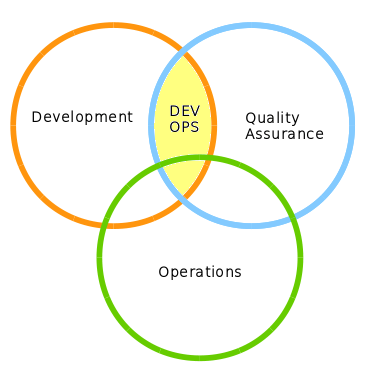

What is DevOps?

In a nutshell, DevOps is a cultural movement that aims to remove unnecessary silos in an organization through collaboration and communication. In less abstract terms, DevOps can be seen as a set of software development practices that enable automation and accelerate the delivery of products. Automation, in turn, requires a programmable, dynamic platform.

What are the components of DevOps?

Operations: responsible for the infrastructure and operational environments that support application deployment, including the network infrastructure. In most cases this is the role of system administrators.

Devs: responsible for software engineering and development. In most cases developers and architects fall into this category.

Quality Assurance: responsible for verifying the quality of the product, for example product testers.

Do you think Devs and Ops will radically change their working routine?

In most cases, no. Ops will still be Ops and Devs will still be Devs. The difference is that these teams need to begin working closely together.

How can you improve DevOps culture?

- Open communication: a new culture is always created through discussions. In the DevOps approach, however, the discussions focus on the product throughout its lifecycle rather than on the organization.

- Responsibility: DevOps is most effective when its principles pervade the whole organization rather than being limited to single roles. Everyone is accountable for building and running an application that works as expected. This translates into assigning wider responsibilities and rewards at various levels.

- Respect: open communication requires respect, which means respectful discussion and listening to other people’s opinions and experiences.

- Trust: trust is essential in a DevOps organization. Operations must trust that Development is doing its best according to the common plan. Development must trust that Quality Assurance is there to improve the quality of its work, and the Product Manager needs to trust that Operations will provide precise metrics and reports on the product deployment.

Which technologies can act as drivers to enable DevOps?

- PaaS: a category of cloud computing services that provides a platform allowing customers to develop, run, and manage applications without the complexity of building and maintaining the infrastructure.

- IaaS: a category of cloud computing services that abstracts the user from infrastructure details such as physical computing resources, location, data partitioning, scaling, security, and backup.

- Configuration automation: Automation is a big win in part because it eliminates the labor associated with repetitive tasks. Codifying such tasks also means documenting them and ensuring that they’re performed correctly, in a safe manner, and repeatedly across different infrastructure types.

- Microservices: a way of designing software applications as suites of independently deployable services.

- Containers: Containers modernize IT environments and processes, and provide a flexible foundation for implementing DevOps. At the organizational level, containers allow for appropriate ownership of the technology stack and processes, reducing hand-offs and the costly change coordination that comes with them.
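The configuration automation point above relies on tasks that can be run repeatedly and always end in the same state. Here is a minimal sketch of that idea in Python. The `ensure_line` helper and the `app.conf` file name are made up for illustration; tools such as Puppet or Chef offer far richer versions of the same principle.

```python
import tempfile
from pathlib import Path


def ensure_line(path: Path, line: str) -> bool:
    """Make sure `line` is present in the file at `path`.

    Returns True if the file was changed, False if it was already correct.
    Hypothetical helper for illustration only.
    """
    existing = path.read_text().splitlines() if path.exists() else []
    if line in existing:
        return False  # already in the desired state, nothing to do
    existing.append(line)
    path.write_text("\n".join(existing) + "\n")
    return True


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        config = Path(tmp) / "app.conf"  # made-up config file
        print(ensure_line(config, "max_connections=100"))  # first run changes the file
        print(ensure_line(config, "max_connections=100"))  # second run is a no-op
```

Because the task describes the desired state rather than the steps to reach it, running it twice is safe, which is what makes it suitable for repeated, unattended execution.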

What are Microservices and why do they have an impact on Operations?

Microservices are a product of software architecture and programming practices. Microservices architectures typically produce smaller but more numerous artifacts that Operations is responsible for regularly deploying and managing. For this reason microservices have an important impact on Operations. The term that describes the responsibilities of deploying microservices is microdeployments. So, what DevOps is really about is bridging the gap between microservices and microdeployments.

Which tools are typically integrated into a DevOps workflow?

Many different types of tools are integrated into the DevOps workflow. For example:

- Code repositories: like Git

- Container development tools: to convert code in a repository into a portable containerized image that includes any required dependencies

- Virtual machine software: like Vagrant, for creating and configuring lightweight, reproducible, and portable development environments

- IDE: like Eclipse, which integrates with DevOps platforms like OpenShift

- Continuous Integration and Delivery software: like Jenkins, which can automate pushing the code to production once it has passed automated testing.

What is Continuous Integration (CI) and why is it important?

Continuous Integration is a software development practice where developers regularly merge their code changes into a central repository, and automated build and test processes are run. It allows for early detection and resolution of conflicts and issues, and helps ensure that the code remains in a releasable state at all times.
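To make the "automated build and test" part concrete, here is a rough sketch of what a CI server does on every merge: run a fixed list of steps and stop at the first one that fails. The `run_pipeline` function and the placeholder commands are assumptions for illustration and do not reflect how any particular CI tool is configured.

```python
import subprocess
import sys


def run_pipeline(steps: list[tuple[str, list[str]]]) -> bool:
    """Run each (name, command) step in order and stop at the first failure."""
    for name, command in steps:
        result = subprocess.run(command, capture_output=True, text=True)
        if result.returncode != 0:
            print(f"step '{name}' failed with exit code {result.returncode}")
            return False
        print(f"step '{name}' passed")
    return True


if __name__ == "__main__":
    # Placeholder commands: a real pipeline would call the project's own
    # build and test tooling here.
    steps = [
        ("build", [sys.executable, "-c", "print('compiling')"]),
        ("test", [sys.executable, "-c", "assert 1 + 1 == 2"]),
    ]
    print("pipeline ok" if run_pipeline(steps) else "pipeline failed")
```

Failing fast is the point: a broken build is reported before later steps run, so the problem is caught close to the change that caused it.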

What is containerization and how is it different from virtualization?

Containerization is a method of packaging software in which an application and its dependencies are bundled together in a container. Containers provide a consistent environment for the application to run in, regardless of the underlying infrastructure. Virtualization, on the other hand, creates a virtual version of a physical resource, such as a server or operating system. While virtualization runs a separate operating system in each virtual machine, containers share the host operating system kernel, so multiple containers can run on a single host, which makes containerization more lightweight and efficient than virtualization.

Explain the concept of a “Blue-Green Deployment”?

Blue-Green Deployment is a technique for minimizing downtime during a release by running two identical production environments, known as Blue and Green. At any given time, one of the environments is live and serving traffic, while the other environment is idle. When a new release is ready, it is first deployed to the idle environment, and then tested and validated. Once it has been verified, traffic is directed to the new environment (for example the green environment), which becomes live, while the old one becomes idle and can be kept for a quick rollback or shut down. This way, traffic is not impacted during the switch.
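The switch described above can be sketched in a few lines of Python. This is only a toy model: `Environment`, `BlueGreenRouter` and the `is_healthy` callback are hypothetical names, and in a real setup the swap would be performed by a load balancer or DNS change rather than by swapping two attributes.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Environment:
    name: str
    version: str


class BlueGreenRouter:
    """Tracks which of two environments is live (hypothetical illustration)."""

    def __init__(self, live: Environment, idle: Environment) -> None:
        self.live = live
        self.idle = idle

    def release(self, version: str, is_healthy: Callable[[Environment], bool]) -> bool:
        """Deploy to the idle environment and switch traffic only if it is healthy."""
        self.idle.version = version
        if not is_healthy(self.idle):
            return False  # the live environment keeps serving the old version
        # Swap roles: the validated environment goes live, the old one becomes idle.
        self.live, self.idle = self.idle, self.live
        return True


if __name__ == "__main__":
    router = BlueGreenRouter(Environment("blue", "1.0"), Environment("green", "1.0"))
    switched = router.release("1.1", is_healthy=lambda env: env.version == "1.1")
    print(switched, router.live.name, router.live.version)
```

Note that a failed health check never touches the live environment, and after a successful switch the previous version is still sitting in the idle environment if a rollback is needed.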

What is the difference between DevOps and Platform Engineering?

DevOps and Platform Engineering are both disciplines that focus on building and maintaining the infrastructure and tools that are used to develop, test, and deploy software. However, there are some key differences between the two fields.

DevOps is a methodology that aims to bring together development and operations teams in order to build and deploy software faster and more efficiently. The goal of DevOps is to automate as much of the software development process as possible, from building and testing code, to deploying and monitoring it in production. This is done by using a variety of tools such as continuous integration and delivery (CI/CD) pipelines, containerization, and infrastructure as code. DevOps teams work closely with developers and operations teams to ensure that software is delivered quickly and with high quality.

Platform engineering, on the other hand, is a discipline that focuses on building and maintaining the underlying infrastructure and tools that software developers use to build, test, and deploy their applications. This includes things like container orchestration systems, databases, and other infrastructure services. Platform engineers also work closely with developers and operations teams to ensure that the underlying infrastructure is stable, scalable, and secure.

One way to think about the difference between DevOps and platform engineering is that DevOps is focused on the “people” aspect of software development, while platform engineering is focused on the “technology” aspect. DevOps focuses on bringing development and operations teams together, while platform engineering focuses on building and maintaining the underlying infrastructure and tools.

Another key difference is the scope of responsibilities. DevOps teams are responsible for the complete software development lifecycle, including continuous integration and continuous delivery of the software, and for building and deploying code. Platform engineers are responsible for the underlying infrastructure and tools and are not necessarily involved at the code level.

Both disciplines are essential for building and deploying software quickly and efficiently. DevOps teams focus on the processes and people that are needed to build and deploy software, while platform engineers focus on the underlying infrastructure and tools that are used to build and deploy software. Together, these two disciplines work to ensure that software is delivered quickly and with high quality.

What is Automation?

Automation is the process of removing manual, error-prone operations from your services, ensuring that your applications or services can be repeatedly deployed.

Automation is a key point of DevOps, but what is its prerequisite?

The necessary prerequisite is standardization, which means both:

- Technical standardization: choose standard operating systems and middleware, and develop with a standard set of common libraries.

- Process standardization: adopt a standard systems development life cycle, release management, monitoring, and escalation management.

At which levels can automation be applied in DevOps?

At three levels:

1) Application lifecycle: software features, version control, build management, and integration frameworks

2) Middleware platform: installing middleware, autoscaling, and resource optimization of middleware components

3) Infrastructure: provisioning operating system resources and virtualizing them

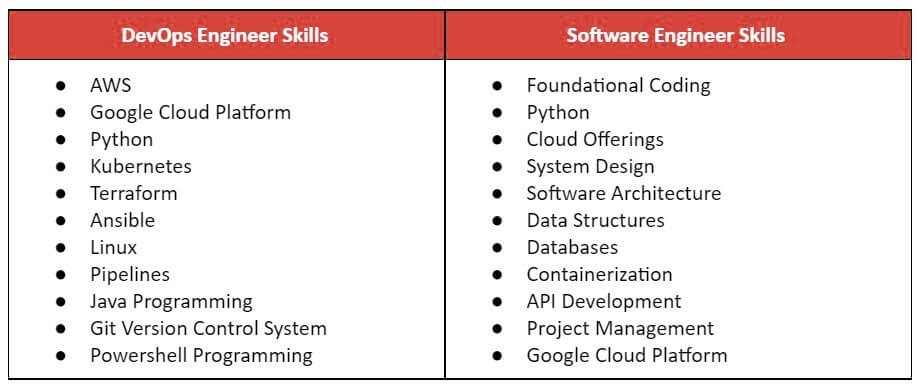

Which scripting language is most important for a DevOps engineer?

Software development and operational automation both require programming. In terms of scripting, Bash is the most frequently used Unix shell and should be your first automation choice. It has a simple syntax and is well suited to executing programs in a non-interactive manner. The same goes for Perl, which owes a great deal of its popularity to being very good at manipulating text and storing data in databases.

Next, if you are using Puppet or Chef it’s worth learning Ruby, which is relatively easy to learn and is the language many automation tools have been written in.

Java has a huge impact on the IT backend, although it is less widespread in Operations.

Explain how DevOps is helpful to developers?

DevOps brings faster and more frequent release cycles, which allow developers to identify and resolve issues quickly and to implement new features sooner.

Since DevOps encourages people to wear different hats, developers who collaborate with Operations will create software that is easier to operate, more reliable, and ultimately better for the business.

How does the database fit into DevOps?

In a perfect DevOps world, the DBA is an integral part of both Development and Operations teams, and database changes should be as simple as code changes. So, you should be able to version and automate your database scripts just like your application code. When choosing between RDBMS, NoSQL, or other kinds of storage solutions, keep in mind that a good database design means fewer changes to your data schema and more efficient testing and service virtualization. Treating database management as an afterthought and not choosing the right database during the early stages of the software development lifecycle can prevent successful adoption of DevOps.
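One common way to version and automate database scripts is to keep an ordered list of migrations and record which ones have already been applied. The sketch below uses Python's built-in `sqlite3` module; the `customer` table, the `schema_version` table and the `migrate` function are made-up names for illustration, and dedicated migration tools handle many cases this sketch ignores (rollbacks, locking, checksums).

```python
import sqlite3

# Ordered, versioned schema changes. In practice these would live as files
# in the same repository as the application code (table names are made up).
MIGRATIONS = [
    (1, "CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT NOT NULL)"),
    (2, "ALTER TABLE customer ADD COLUMN email TEXT"),
]


def migrate(conn: sqlite3.Connection) -> int:
    """Apply any migrations not yet recorded and return the schema version."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS schema_version (version INTEGER PRIMARY KEY)"
    )
    current = conn.execute(
        "SELECT COALESCE(MAX(version), 0) FROM schema_version"
    ).fetchone()[0]
    for version, statement in MIGRATIONS:
        if version > current:
            conn.execute(statement)
            conn.execute(
                "INSERT INTO schema_version (version) VALUES (?)", (version,)
            )
            current = version
    conn.commit()
    return current


if __name__ == "__main__":
    connection = sqlite3.connect(":memory:")
    print(migrate(connection))  # applies both migrations
    print(migrate(connection))  # nothing left to apply
```

Since the database itself records its schema version, the same script can run in every environment and in the deployment pipeline without anyone tracking by hand which changes were applied where.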

How would you approach monitoring and troubleshooting a distributed system?

Monitoring and troubleshooting a distributed system can be challenging due to the complexity of the underlying infrastructure. One approach is to use monitoring tools that are designed for distributed systems, such as Prometheus or Datadog, to collect and visualize metrics from all the different components of the system. Additionally, setting up logging and tracing systems, such as Elasticsearch, Logstash, and Kibana (ELK) for logs or OpenTracing for traces, can help in identifying the source of errors and issues. Another useful technique is chaos engineering, for example with Gremlin, to proactively test the system’s resilience and identify potential issues.
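To give a feel for what "collecting metrics from all the components" means, here is a small in-memory sketch that counts requests per service and status code and derives an error rate. The `RequestMetrics` class and the `requests_total` metric name are assumptions for illustration; a real system would use the client library of its monitoring tool instead of this hand-rolled class.

```python
from collections import Counter


class RequestMetrics:
    """In-memory request counters per service and status (illustrative only)."""

    def __init__(self) -> None:
        self._counts: Counter = Counter()

    def record(self, service: str, status: int) -> None:
        self._counts[(service, status)] += 1

    def error_rate(self, service: str) -> float:
        """Fraction of recorded requests for `service` with a 5xx status."""
        total = sum(n for (svc, _), n in self._counts.items() if svc == service)
        errors = sum(
            n
            for (svc, status), n in self._counts.items()
            if svc == service and status >= 500
        )
        return errors / total if total else 0.0

    def render(self) -> str:
        """Render counters as text lines, one per (service, status) pair."""
        lines = [
            f'requests_total{{service="{svc}",status="{status}"}} {n}'
            for (svc, status), n in sorted(self._counts.items())
        ]
        return "\n".join(lines)


if __name__ == "__main__":
    metrics = RequestMetrics()
    for status in (200, 200, 200, 500):
        metrics.record("api", status)
    print(metrics.render())
    print(metrics.error_rate("api"))
```

Labelling every measurement with the service it came from is what lets you narrow a system-wide symptom down to the component that is actually misbehaving.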

How do you manage secrets and secure sensitive data in a DevOps pipeline?

Managing secrets and securing sensitive data in a DevOps pipeline is a critical task that requires careful consideration. One approach is to use a dedicated secrets management tool, such as HashiCorp Vault, AWS Secrets Manager, or Google Cloud KMS, to securely store and encrypt sensitive information. Access to the secrets should be strictly controlled and logged, and secrets should be rotated regularly. Additionally, it is important to ensure that sensitive data is not stored in plaintext in version control systems or configuration files. Instead, the data should be encrypted and decrypted as needed using a secure encryption tool.

It’s also a best practice to limit the exposure of secrets to the minimum, i.e. only the services that need them should have access to them and use them.
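On the application side, two habits follow from the advice above: read secrets from the environment the pipeline injects rather than from files in the repository, and make it hard to print them by accident. Here is a minimal sketch; the `Secret` wrapper, the `load_secret` function and the `DEMO_DB_PASSWORD` variable name are hypothetical and not tied to any particular secrets manager.

```python
import os


class Secret:
    """Wraps a sensitive value so it is not printed or logged by accident."""

    def __init__(self, value: str) -> None:
        self._value = value

    def reveal(self) -> str:
        """Return the raw value; call this only where it is actually needed."""
        return self._value

    def __repr__(self) -> str:
        return "Secret(****)"

    __str__ = __repr__


def load_secret(name: str) -> Secret:
    """Read a secret injected into the environment by the pipeline."""
    value = os.environ.get(name)
    if not value:
        raise RuntimeError(f"required secret {name} is not set")
    return Secret(value)


if __name__ == "__main__":
    os.environ["DEMO_DB_PASSWORD"] = "not-a-real-password"  # stand-in for pipeline injection
    password = load_secret("DEMO_DB_PASSWORD")
    print(password)  # masked; the raw value needs an explicit reveal()
```

Failing loudly when a secret is missing is deliberate: a service that silently starts with an empty password is harder to diagnose than one that refuses to start.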

Stay tuned, we will keep updating the DevOps interview questions!

References: https://www.redhat.com/en/insights/devops
